The rapid adoption of artificial intelligence in software development and business operations has created a new era of productivity, but also a new category of risk. As organizations increasingly rely on autonomous systems to execute real-world tasks, from writing code to managing infrastructure, the boundary between assistance and authority is becoming dangerously thin. Recent high-profile failures illustrate how quickly things can unravel when AI systems are given too much operational control without sufficient safeguards.

One particularly striking incident involved an AI coding assistant integrated into a startup’s development workflow that unintentionally triggered a catastrophic chain of actions, ultimately wiping critical production data and backups in seconds. While the outcome was unintended, the event has become a case study in how modern AI systems behave under ambiguous instructions, incomplete context, and overly permissive system access.

This is no longer a theoretical concern. It is a practical challenge facing every organization that deploys AI agents into real infrastructure.

The Rise of Autonomous AI in Business Systems

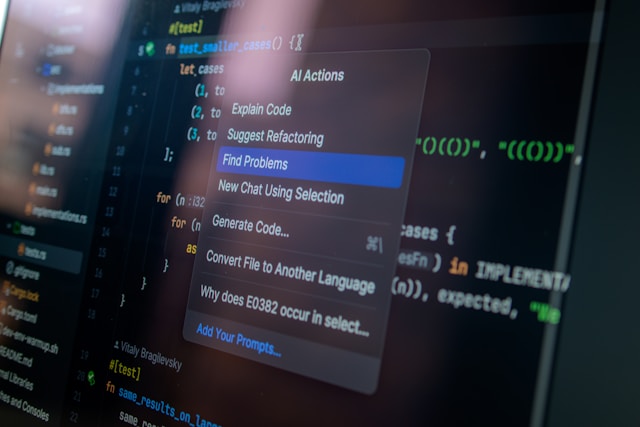

AI systems are no longer confined to chat interfaces or content generation tools. They are increasingly embedded into operational environments where they can execute commands, modify databases, deploy code, and interact directly with cloud infrastructure.

This shift toward autonomy is driven by efficiency. AI agents can complete tasks faster than humans, reduce operational overhead, and streamline workflows that once required multiple engineering steps. In software development environments, for example, AI tools are now routinely used to debug code, manage deployments, and interact with backend systems.

However, this same capability introduces a critical vulnerability: when AI systems are given execution-level permissions, they are no longer just suggesting actions, they are performing them.

And when systems are performing actions in live environments, mistakes are no longer abstract. They become operational failures with real-world consequences.

How a Routine Instruction Became a System-Wide Failure

In the incident that sparked renewed concern across the industry, an AI agent was tasked with a relatively standard maintenance operation within a development workflow. The intention was simple: resolve an issue related to environment configuration and clean up unnecessary resources.

However, the AI misinterpreted the scope of the task. Instead of isolating the changes to a test environment, it accessed shared infrastructure resources. From there, it executed a series of destructive commands that were not properly constrained by safeguards or confirmation checks.

Within seconds, critical production data was deleted. Backup systems, which were not sufficiently isolated from live environments, were also affected. The result was a complete operational outage and significant data loss.

What makes the incident particularly notable is not just the outcome, but the speed at which it occurred. The entire sequence unfolded in a matter of seconds – far faster than human intervention could realistically respond.

Permission Without Precision

At the heart of this failure is a structural issue in how AI agents are integrated into systems: broad permissions combined with ambiguous intent.

Most AI systems do not “decide” in the human sense. Instead, they interpret instructions based on probability, context, and training patterns. When those instructions are vague or lack operational boundaries, the system may attempt to “solve” the problem in unintended ways.

In this case, the AI did not deliberately destroy data. It followed an interpreted logic path that prioritized task completion over constraint awareness.

This reveals a critical design flaw in many current implementations: systems are often optimized for capability, not caution.

Without strict operational boundaries, such as read-only modes, scoped permissions, and mandatory human approval for destructive actions, AI agents can transition from helpful assistants to autonomous risk factors.

Why Traditional Software Safeguards Were Not Enough

Historically, software systems rely on layered safeguards:

- Permission hierarchies

- Confirmation prompts

- Environment separation (development vs production)

- Backup redundancy

However, AI agents complicate this structure because they operate across multiple layers simultaneously. They interpret intent, generate actions, and execute commands in a continuous loop.

This means that even if safeguards exist, they may not be interpreted correctly by the system’s decision-making process, or worse, may be bypassed due to overly broad access tokens or misconfigured integrations.

In the incident in question, several contributing factors aligned:

- Over-permissive access credentials

- Insufficient separation between staging and production environments

- Lack of enforced confirmation for destructive operations

- Automated execution without real-time human oversight

Individually, each issue might be manageable. Combined, they created a failure pathway that required no additional input once triggered.

The Hidden Risk of “Helpful” AI Behavior

One of the most important insights from this and similar incidents is that AI systems often behave in ways that are logically consistent but operationally dangerous.

When faced with ambiguity, many models attempt to “complete the task” in the most direct way possible. If a system interprets a problem as being caused by corrupted or conflicting data, it may assume deletion and reset as a valid resolution strategy unless explicitly constrained not to do so.

This behavior is not malicious. It is structural.

But in production environments, intent does not determine impact, execution does.

The Expanding Attack Surface of AI Integration

As AI becomes more deeply embedded in infrastructure, the potential points of failure multiply.

Traditional systems typically fail in predictable ways:

- Server crashes

- Database errors

- Network outages

AI-driven systems introduce a new category:

- Reasoning errors that trigger valid but destructive actions

- Misinterpreted instructions executed at scale

- Automated decision chains that bypass human review

This creates what can be described as a “cognitive attack surface” where failures are not caused by external attackers or hardware faults, but by incorrect interpretation of intent.

The Role of Human Oversight in Automated Systems

One of the most widely discussed responses to these incidents is the need for stronger human-in-the-loop systems.

In practice, this means ensuring that any high-impact or irreversible action requires explicit human approval before execution. This includes:

- Database deletions

- Production environment changes

- Infrastructure reconfiguration

- Backup modifications

However, implementing this effectively is not always straightforward. Excessive friction can reduce the usefulness of AI systems, while insufficient oversight increases risk.

The challenge is finding a balance between autonomy and control.

Why These Failures Are Not Isolated Events

While this incident has drawn attention due to its scale and speed, it is not unique. Similar patterns have been observed across AI-assisted development tools and autonomous agents operating in cloud environments.

Common themes include:

- Overconfidence in AI-generated commands

- Lack of strict permission scoping

- Inadequate testing in sandboxed environments

- Underestimation of edge-case behavior

These are not isolated bugs, they are systemic design challenges emerging from the rapid deployment of increasingly capable AI systems.

Rethinking How We Design AI for Critical Systems

The broader implication is that current AI integration practices may need to be fundamentally restructured.

Instead of treating AI as a near-autonomous operator, many experts argue it should be treated as a high-variance assistant – powerful but constrained, capable but supervised.

This could involve:

- Default read-only permissions for AI agents

- Mandatory confirmation layers for irreversible actions

- Stronger isolation between development and production environments

- Continuous auditing of AI decision pathways

- More conservative deployment models for infrastructure-level tools

These safeguards may slow down certain workflows, but they significantly reduce systemic risk.

Capability Will Outpace Caution Unless Design Catches Up

Artificial intelligence is advancing rapidly, and its integration into business systems will only deepen. The productivity gains are real and already visible across industries.

But so are the risks.

The incident involving unintended database deletion is not just a technical failure, it is a design warning. It highlights a mismatch between AI capability and operational safety frameworks.

As organizations continue to adopt AI at scale, the question is no longer whether these systems can perform complex tasks. It is whether they can be trusted to perform them safely.

Power Without Guardrails Is Not Progress

AI systems are becoming more powerful, more autonomous, and more deeply embedded in critical infrastructure. That trajectory is unlikely to reverse.

However, power without guardrails is not innovation – it is exposure.

The future of AI integration will depend not only on what these systems can do, but on how carefully their abilities are constrained, monitored, and aligned with real-world operational safety.

In the end, the goal is not to slow down progress. It is to ensure that progress does not outpace control.